AI Is No Longer Just Generating Content. It’s Taking Action.

Artificial intelligence has come a long way—it’s no longer just an assistant, but a real operational layer inside modern organizations.

Tools that once summarized content and answered questions have evolved into systems that can perform real tasks. AI agents now retrieve internal data, call APIs, trigger workflows, and interact with external services in real time. Autonomous agents can plan and execute complex tasks independently, which makes it essential to monitor how they behave inside dynamic, real-time systems.

This shift changes everything.

When AI was limited to generating outputs, mistakes were visible and manageable. A wrong answer could be ignored or corrected. But when AI systems begin taking action—moving data, initiating processes, or interacting with infrastructure—the consequences become immediate and operational. As AI infrastructure expands, the organization’s attack surface also grows, requiring new approaches to monitoring and control to address emerging vulnerabilities and risks.

Organizations are no longer just managing information. They are managing autonomous behavior, and that starts with understanding what agents actually do at runtime.

The Industry Focuses on Security – But the Real Gap Is Control

Most organizations are responding to AI adoption with traditional security strategies. They are strengthening access controls, monitoring systems, and data protection frameworks.

This is necessary—but incomplete.

According to IBM, the global cost of data breaches continues to rise, pushing organizations to invest heavily in cybersecurity. At the same time, Gartner projects that AI-driven automation will rapidly expand across enterprise workflows.

These trends are creating a new kind of operating environment—one where systems interact directly with each other, decisions happen instantly, and automation scales beyond what humans can realistically monitor. As AI becomes embedded in core workflows, the speed and volume of actions increase, making traditional control models less effective.

Security protects systems from external threats. But it does not determine what AI systems are allowed to do internally. Because AI systems usually operate with legitimate access to data and resources, their actions pass conventional checks, which makes runtime threats harder to spot and can weaken the organization’s overall security posture.

That is a different problem.

When AI Starts Acting, Risk Moves Inside the System

As AI systems gain the ability to act, risk shifts from external attacks to internal behavior.

In real enterprise environments, this is already showing up in subtle but impactful ways. AI agents may retrieve sensitive internal data and pass it to external services without proper filtering. Automated workflows can trigger actions across systems that were never intended to be connected. Even AI copilots, designed to assist, can expose confidential or regulated information, including personally identifiable information (PII), simply by responding to the wrong prompt.

What makes these situations especially challenging is that they don’t look like traditional attacks—they are legitimate actions happening without sufficient control. Another emerging threat is memory poisoning, where malicious manipulation of internal AI knowledge can compromise the integrity of the system during inference.

Why Monitoring and Alerts Are Not Enough

Most organizations rely on visibility tools to manage risk. These include logging systems, anomaly detection, and alerting platforms. However, effective risk management in AI-driven environments also requires runtime visibility to continuously monitor AI systems in real time, enabling detailed tracking of inputs, outputs, and behaviors as they occur.

While useful, these approaches are inherently reactive.

By the time an alert is triggered, the action has already taken place. Data may already be exposed, workflows already executed, and downstream effects already in motion. In AI-driven environments, this delay is critical.

Monitoring tells you what happened

Security helps detect threats

API request analysis helps distinguish normal user activity from malicious attack patterns

But none of these prevents unsafe actions from occurring in real time.

To solve this, organizations need a different model—one that operates before execution, not after.

A New Approach: AI Runtime Control

AI runtime control introduces a layer that evaluates and governs AI-driven actions as they happen. Monitoring live AI behavior in real time is crucial to ensure compliance, accountability, and operational control.

At its core, this approach introduces a real-time decision layer into every interaction. Instead of allowing actions to execute immediately, each request is intercepted, evaluated in context, and checked against defined policies. Only then is a decision made: allow the action, stop it entirely, or modify it to reduce risk. Enforcing controls at this point, during execution, helps protect production environments by ensuring that only compliant actions proceed.
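The intercept-then-decide pattern described above can be sketched in a few lines of Python. This is a minimal illustration, not Vaikora's API: the `controlled` decorator, the `ActionRequest` type, the `no_external_upload` rule, and the example URLs are all hypothetical names chosen for the sketch.

```python
from dataclasses import dataclass
from functools import wraps
from typing import Any, Callable, Dict, List


@dataclass
class ActionRequest:
    """A proposed action, captured before anything executes."""
    tool: str
    args: Dict[str, Any]


class ActionBlocked(Exception):
    """Raised when a policy refuses an action."""


# Hypothetical policy: return True to permit the request, False to refuse it.
def no_external_upload(request: ActionRequest) -> bool:
    destination = str(request.args.get("destination", ""))
    return not destination.startswith("https://external.")


POLICIES: List[Callable[[ActionRequest], bool]] = [no_external_upload]


def controlled(tool_name: str) -> Callable:
    """Wrap a tool so every call is checked against POLICIES before it runs."""
    def decorator(func: Callable[..., Any]) -> Callable[..., Any]:
        @wraps(func)
        def wrapper(**kwargs: Any) -> Any:
            request = ActionRequest(tool=tool_name, args=kwargs)
            for policy in POLICIES:
                if not policy(request):
                    # The underlying function is never called.
                    raise ActionBlocked(f"{tool_name} refused by {policy.__name__}")
            return func(**kwargs)
        return wrapper
    return decorator


@controlled("send_report")
def send_report(destination: str, body: str) -> str:
    return f"sent {len(body)} bytes to {destination}"
```

The design point is that the check sits in the call path itself: a refused request raises before `send_report` runs, rather than producing an alert afterwards.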

This shifts AI from an unpredictable system into one that operates within controlled, enforceable boundaries.

AI Runtime Control vs AI Security: What’s the Difference?

AI runtime security is one of the core capabilities required to safeguard AI applications during active operation. Runtime control uses guardrails to protect the AI model from attacks and undesired responses, ensuring that both the model and the application remain secure.

Security focuses on protecting systems. Runtime control focuses on governing behavior.

Key features of an AI runtime security solution include continuous visibility, enforcement mechanisms, low latency, and adaptability within AI architectures.

What a Control Layer Actually Does

A control layer introduces a real-time decision point into every AI-driven interaction.

When an AI system attempts to perform an action—such as accessing data or calling an API—that request is intercepted and evaluated. The system considers factors like data sensitivity, destination risk, and contextual intent. To improve performance and accuracy, AI models can be tailored to a specific task within security platforms, such as vulnerability prediction, attack mapping, or response guidance.

Based on this evaluation, the system enforces one of three outcomes:

Allow — the action proceeds normally

Block — the action is stopped entirely

Constrain — the action is modified, such as redacting sensitive data or limiting parameters

This happens instantly, without requiring manual review for each decision, allowing AI systems to operate safely without slowing them down.

At the same time, every action is recorded, creating a clear and traceable history of what occurred and why—critical for both operational visibility and compliance.
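As a rough sketch of how the three outcomes and the audit trail could fit together, the Python below evaluates a request on two of the factors named above (data sensitivity and destination risk) and records each decision with its reason. Everything here is an assumption made for illustration: the SSN pattern stands in for "sensitive data", the destination names are invented, and a real deployment would use richer classifiers and durable log storage.

```python
import json
import re
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Outcome(str, Enum):
    ALLOW = "allow"
    BLOCK = "block"
    CONSTRAIN = "constrain"


@dataclass
class Decision:
    outcome: Outcome
    reason: str
    payload: Optional[str]  # what is actually released, if anything


# Illustrative only: US-style SSNs stand in for sensitive data.
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
TRUSTED_DESTINATIONS = {"crm.internal", "billing.internal"}  # hypothetical names

AUDIT_LOG: List[str] = []  # in-memory stand-in for a durable audit store


def evaluate(action: str, destination: str, payload: str) -> Decision:
    """Decide allow / block / constrain and record what was decided and why."""
    sensitive = bool(SSN.search(payload))
    trusted = destination in TRUSTED_DESTINATIONS

    if sensitive and not trusted:
        decision = Decision(Outcome.BLOCK, "sensitive data to untrusted destination", None)
    elif sensitive:
        redacted = SSN.sub("[REDACTED]", payload)
        decision = Decision(Outcome.CONSTRAIN, "sensitive fields redacted", redacted)
    else:
        decision = Decision(Outcome.ALLOW, "no sensitive data detected", payload)

    AUDIT_LOG.append(json.dumps({
        "action": action,
        "destination": destination,
        "outcome": decision.outcome.value,
        "reason": decision.reason,
    }))
    return decision
```

Calling `evaluate("send", "crm.internal", "SSN 123-45-6789")` in this sketch yields a constrain decision whose payload has the number replaced, while the same payload sent to an unlisted destination is blocked outright.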

Where Most AI Security Solutions Fall Short

Many existing tools attempt to extend traditional security models into AI environments, but they were not designed for how AI systems actually operate today.

They tend to focus on APIs rather than full workflows, lack integration with agent-based architectures, and rely heavily on monitoring after the fact.

Additionally, shadow AI and third-party tools can introduce significant risks, especially when AI apps are deployed without proper oversight or integration into security frameworks. Training data is another potential exposure point: like the cloud services, APIs, and vector databases that make up the rest of the AI stack, it can be targeted through data theft or poisoning.

As a result, organizations may gain visibility into AI activity—but still lack control over it.

Governance and Compliance: The Overlooked Imperative

As AI systems and AI agents become central to business operations, governance and compliance have emerged as critical pillars of AI security. The rapid adoption of artificial intelligence brings not only new capabilities, but also new responsibilities—especially when it comes to managing sensitive data and ensuring responsible AI behavior.

Traditional security controls are no longer sufficient to address the complex risks posed by modern AI. Prompt injection attacks and vulnerabilities within AI models can both lead to sensitive data exposure and operational risk. These challenges demand robust governance frameworks that go beyond technical safeguards, providing clear policies and oversight for how AI systems access, process, and share information.

Effective AI governance solutions are designed to manage risk at every stage of the AI lifecycle. This includes setting boundaries for data access, monitoring for unauthorized actions, and ensuring that AI agents operate within defined ethical and regulatory guidelines. By implementing comprehensive governance solutions, organizations can proactively address issues like prompt injection and model misuse, reducing the likelihood of unintended data leaks or compliance violations.

Ultimately, strong AI governance is not just about meeting regulatory requirements. It is about building trust in AI applications and ensuring that artificial intelligence delivers value without compromising security or compliance. As the regulatory landscape evolves and the obligations in frameworks like the EU AI Act phase in, organizations that prioritize governance will be better positioned to manage risk, protect sensitive information, and maintain a resilient security posture in the age of AI.

How Data443 Approaches This with Vaikora

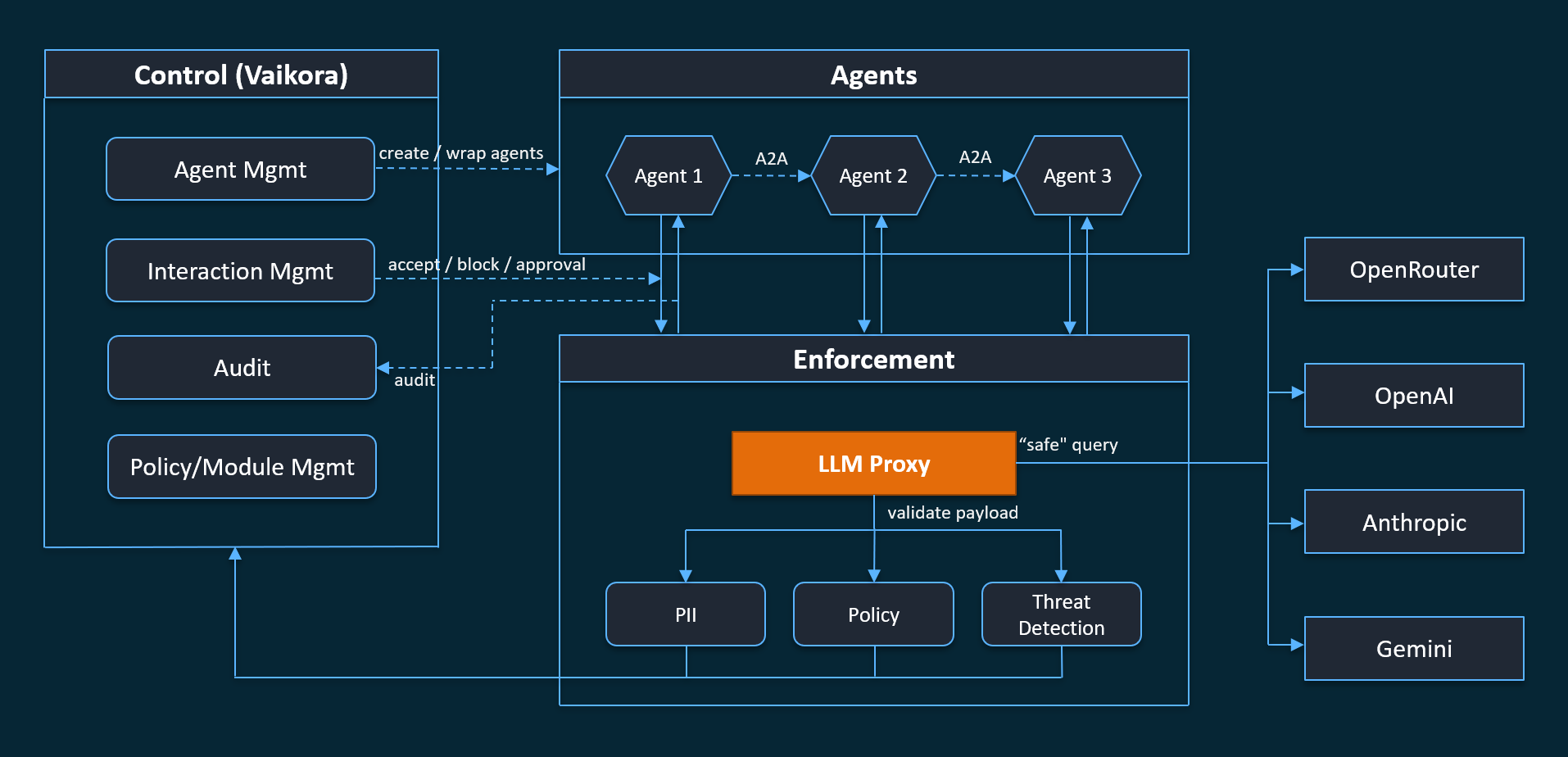

Data443 acquired Vaikora to address this gap directly.

Rather than adapting legacy security tools, Vaikora introduces a control layer designed specifically for modern AI systems. It operates inline, ensuring that every action taken by an AI system is evaluated before it is executed.

This allows organizations to:

enforce policies consistently across AI systems

control how data and tools are accessed

govern multi-step workflows and agent interactions

maintain visibility into every decision

The result is a shift from reactive security to proactive control.

Two Ways to Adopt AI Runtime Control

One of the challenges with new infrastructure is adoption. Organizations need a way to test, validate, and scale without introducing unnecessary complexity.

Vaikora addresses this with two complementary approaches.

Both are designed to support AI deployment and runtime control at enterprise scale, ensuring security and governance across diverse AI workloads.

1. MCP Data Proxy (Open Source)

The MCP Data Proxy provides an accessible starting point.

It allows teams to introduce control into AI workflows by routing agent interactions through a centralized layer. From there, organizations can apply basic policies and observe how AI systems behave in real environments.

With this approach, teams can:

quickly validate the concept of runtime control

restrict access to tools and APIs

redact sensitive information

gain visibility into agent behavior

The proxy also enables monitoring of user input and analysis of tool usage, and it can be tailored for specific tasks to enhance security and control.

Because it is open source, it lowers the barrier to entry and enables rapid experimentation.
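To make the proxy idea concrete, here is a small Python sketch of a centralized layer that restricts tool access, redacts sensitive output, and records every call. It does not use the MCP Data Proxy's real interface or any MCP SDK: the `ToolProxy` class, the example tools, and the email pattern are hypothetical and exist only to illustrate the routing pattern.

```python
import re
from typing import Any, Callable, Dict, List, Set

# Illustrative pattern: email addresses stand in for sensitive information.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


class ToolProxy:
    """Single point that all agent tool calls are routed through (hypothetical design)."""

    def __init__(self, allowed_tools: Set[str]) -> None:
        self.allowed_tools = allowed_tools
        self.tools: Dict[str, Callable[..., str]] = {}
        self.calls: List[Dict[str, Any]] = []  # visibility into agent behavior

    def register(self, name: str, func: Callable[..., str]) -> None:
        self.tools[name] = func

    def call(self, name: str, **kwargs: Any) -> str:
        permitted = name in self.allowed_tools and name in self.tools
        # Record the attempt whether or not it is permitted.
        self.calls.append({"tool": name, "args": kwargs, "permitted": permitted})
        if not permitted:
            return f"denied: tool '{name}' is not on the allowlist"
        result = self.tools[name](**kwargs)
        # Redact before the result is handed back to the agent.
        return EMAIL.sub("[REDACTED_EMAIL]", result)


def lookup_customer(customer_id: str) -> str:
    return f"Customer {customer_id}: contact jane.doe@example.com"


def delete_records(table: str) -> str:
    return f"deleted all rows in {table}"


proxy = ToolProxy(allowed_tools={"lookup_customer"})
proxy.register("lookup_customer", lookup_customer)
proxy.register("delete_records", delete_records)
```

In this sketch the agent never holds a direct reference to a tool. It can only ask the proxy, so the allowlist, the redaction step, and the call record apply to every interaction by construction.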

2. Vaikora Control Plane (Enterprise)

For organizations that need full governance, the Vaikora Control Plane expands these capabilities into a centralized system.

It provides:

policy management across environments

versioning and controlled rollout of rules

approval workflows for sensitive actions

long-term audit and reporting capabilities

integration with SIEM and logging systems

The Control Plane is designed for production environments, complements pre-deployment testing, and leverages service level objectives to provide targeted alerts and operational assurance.

This creates a scalable model for managing AI systems across complex environments.
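One item in the list above, approval workflows for sensitive actions, can be illustrated with a short Python sketch. It assumes a simple in-memory queue and is not a description of how the Vaikora Control Plane implements approvals; `ApprovalGate`, `SENSITIVE_ACTIONS`, and the action names are hypothetical.

```python
import itertools
from dataclasses import dataclass
from typing import Callable, Dict

SENSITIVE_ACTIONS = {"wire_transfer", "delete_dataset"}  # hypothetical examples


@dataclass
class Pending:
    action: str
    run: Callable[[], str]  # deferred execution, invoked only on approval


class ApprovalGate:
    """Runs routine actions immediately and parks sensitive ones for human review."""

    def __init__(self) -> None:
        self._ids = itertools.count(1)
        self.pending: Dict[int, Pending] = {}

    def submit(self, action: str, run: Callable[[], str]) -> str:
        if action not in SENSITIVE_ACTIONS:
            return run()
        ticket = next(self._ids)
        self.pending[ticket] = Pending(action, run)
        return f"pending approval (ticket {ticket})"

    def approve(self, ticket: int) -> str:
        # Removing the ticket first means an approval cannot be replayed.
        return self.pending.pop(ticket).run()

    def reject(self, ticket: int) -> str:
        item = self.pending.pop(ticket)
        return f"rejected {item.action}"
```

Passing the action as a deferred callable keeps the sensitive operation from running until `approve` is called, and a rejected ticket discards it without ever executing.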

What Organizations Gain from AI Runtime Control

Introducing a control layer changes how organizations manage AI risk.

Instead of reacting to incidents, teams can prevent them. Instead of relying on fragmented tools, they can enforce consistent policies across all systems.

In practical terms, organizations gain:

reduced risk from AI-driven actions

real-time enforcement of policies

improved visibility into system behavior

stronger compliance and audit readiness

lower operational burden on security teams

Integrating threat detection and threat intelligence with automated responses is critical for runtime security to mitigate risks before they escalate.

This is not just an incremental improvement—it is a shift in how AI is governed.

The New Standard for Enterprise AI Security

The conversation around AI is changing.

It is no longer enough to ask whether systems are secure or whether data is protected. Those questions remain important, but they do not address the full scope of risk introduced by autonomous systems.

As generative AI, large language models, and deep learning systems become more prevalent, the ability to control AI actions and interpret outputs in natural language will be essential for building trust and ensuring responsible AI use.

The more important question now is:

Do we control what our AI systems are allowed to do?

Because as AI continues to act on behalf of organizations, control is what turns capability into trust.

Ready to Put a Control Layer on Your AI?

Vaikora gives security teams real-time enforcement, behavioral analytics, and immutable audit logs for every AI action in your environment.

Frequently Asked Questions

What is the Data443 AI Runtime Control & Enforcement Platform?

The Data443 AI Runtime Control & Enforcement Platform is a real-time control layer designed to govern how AI systems operate across enterprise environments. Powered by Vaikora, it evaluates every AI-driven action before execution, ensuring that data access, API calls, and workflows follow defined security and compliance policies. This approach allows organizations to move beyond visibility and enforce decisions at the exact moment AI systems act.

How does Vaikora enhance AI security for enterprises?

Vaikora enhances AI security by introducing real-time enforcement rather than relying only on detection and alerts. It intercepts AI actions as they happen, analyzes context such as data sensitivity and destination risk, and enforces outcomes like allow, block, or constrain. This ensures that AI agents operate safely within enterprise environments, preventing data exposure and unauthorized actions before they occur.

Why is AI runtime control critical for modern SOC teams?

AI runtime control is critical for SOC teams because traditional security tools are reactive and cannot prevent AI-driven actions in real time. As AI agents increasingly interact with data, APIs, and external systems, the risk shifts from external threats to internal behavior. Data443 addresses this gap by enabling SOC teams to enforce policies instantly, reduce manual investigation, and maintain full visibility into AI activity without adding operational overhead.

How does Data443 integrate AI runtime control with existing security tools?

Data443 integrates AI runtime control directly into existing security ecosystems, including SIEM, logging platforms, and endpoint protection tools. Vaikora acts as a control layer that works alongside these systems, adding real-time enforcement to existing detection and monitoring capabilities. This allows organizations to extend their current security investments while gaining the ability to control AI behavior across environments.

What business value do Data443 and Vaikora deliver?

Data443 and Vaikora deliver measurable value by reducing risk from AI-driven actions, improving compliance readiness, and enabling organizations to safely scale AI adoption. By enforcing decisions before execution, organizations can prevent data leaks, control automated workflows, and reduce the burden on security teams. This shifts security operations from reactive response to proactive control, aligning AI usage with business and regulatory requirements.